Written by:

Elena Louder

Carina Wyborn

Chris Cvitanovic

Angela Bednarek

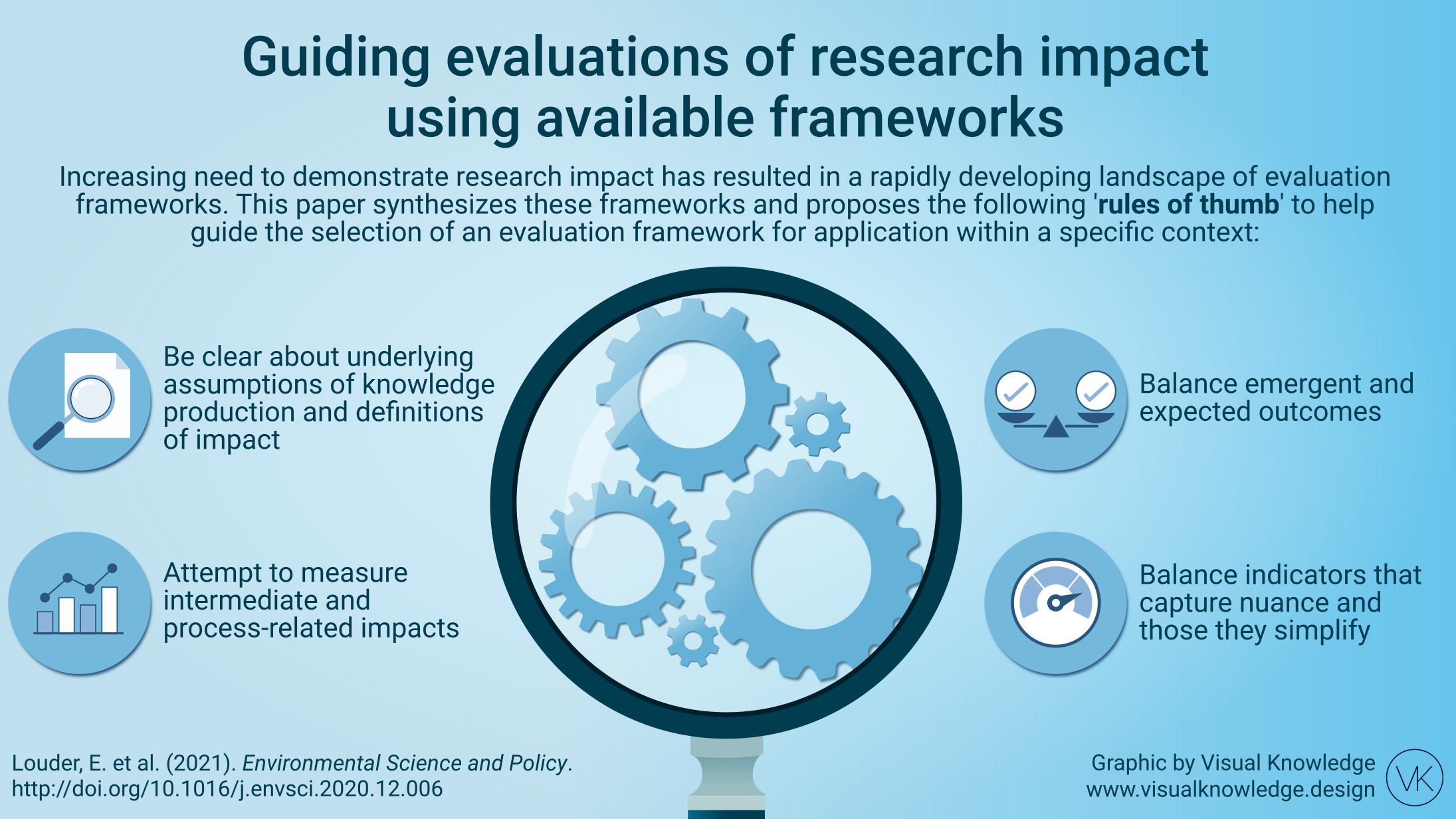

Evaluating the impacts of science on policy and practice is inherently challenging. Impacts can take a variety of forms, occur over protracted timeframes and often involve subtle and hard-to-track changes. In response to these challenges, there has been an increased effort among academics and practitioners across disciplines to develop more useful frameworks to guide the evaluation of impacts at the intersection of science, policy, and practice.

These frameworks have emerged from different domains and disciplines, are framed and described using complementary but often different terminology, and approach evaluation from different founding assumptions. In this rapidly developing field, it can be hard to make sense of them, and to know what works in what contexts and why.

In a recent paper we sought to help overcome this challenge by undertaking a synthesis of the frameworks that are currently available for guiding the evaluation of impacts at the interface of environmental science and policy, examining core assumptions, similarities and differences.

We found that the differences in evaluation frameworks can often be traced back to the underlying epistemology of a specific research project. Our review surfaced a spectrum of understandings of knowledge, ranging from more positivistic epistemologies, where knowledge is certain, fixed, and able to be passed along through the push and pull of knowledge needs and supply, to more constructivist epistemologies, where knowledge is conceptualized as always mediated through culture and worldviews and co-created through various subjectivities. Constructivist definitions of knowledge hold that sustainability science is not primarily about delivering new information to decision-makers; rather, it is about opening up and reframing problems and possibilities. In this understanding, work at the science-policy interface aims not to supply missing information or knowledge, but to co-create new understandings. These various understandings of knowledge then shape what is meant by impact.

From our analysis of this diverse literature, we identify four ‘rules of thumb’ to help guide the selection of evaluation frameworks for application within a specific context.

Four ‘Rules of Thumb’ for selecting evaluation frameworks

Elena Louder is a PhD student in the department of Geography, Development and Environment at the University of Arizona, Tucson, USA. Her research interests include political ecology, the politics of renewable energy development, knowledge co-production, and biodiversity conservation.

Carina Wyborn is an interdisciplinary social scientist with a background in science and technology studies and human ecology. She works on the science and politics of environmental futures at the Institute for Water Futures, Australian National University (ANU).

Chris Cvitanovic is a transdisciplinary marine scientist working to improve the relationship between science, policy and practice at the Australian National University (ANU).

Angela Bednarek is Project Director of Environmental Science at Pew Charitable Trusts. She develops strategy to develop, support, and communicate scientific research to explain emerging issues, inform policy, and advance solutions to conservation problems.
